HPC

“Compute-intensive refers to applications and workloads that require a great deal of computation, necessitating sufficient resources to handle these computation demands in an efficient manner.”

Compute-intensive applications stand in contrast to data-intensive applications, which typically handle large volumes of data and as a result place a greater demand on input/output and data manipulation tasks.

The exploration and production of natural resources by companies both large and small is a significant driver of HPC adoption in the energy industry. Exploration and production (E&P) companies use high-performance computing to interpret seismic data, locate new deposits, and estimate their yields. Engineers and designers also use clusters for modeling, design, and collaboration in plant and production design automation.

Until recently, high-performance computing was available only to the largest E&P companies because of its cost. Technological advances and lower entry costs now give small and mid-size companies access to cluster technology. These organizations can invest a modest amount in a high-performance cluster of 2 to 64 nodes, allowing them to compete at a higher level and increase efficiency.

Oil and gas exploration begins with seismic geophysical surveying. E&P companies use HPC systems to find new hydrocarbon reserves by building models from seismic data. With high-performance computer systems, these models can be created in ever-increasing detail to produce high-resolution subsurface maps of potential oil fields.

Reservoir simulation is used to estimate how much oil and gas a field can produce over a period of time. A major constraint has been the time required to run these complex simulations. HPC systems reduce the time needed to process massive amounts of data and perform the simulations.

Increased success and efficiency in exploration and production have been attributed to 3D visualization. A robust system that incorporates computing, rendering, and data management is a basic requirement for an effective visualization tool.

An HPC system is an ideal platform for running financial applications, which require processing huge volumes of data. Financial simulations are essential tools for estimating risk and formulating a company’s best course of action. An HPC system provides valuable assistance in risk analysis, market analysis, high-frequency and securities trading, securities valuation, and other areas of finance.
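To illustrate why risk simulation is compute-intensive, here is a minimal Monte Carlo value-at-risk (VaR) sketch in Python. It assumes a single position with normally distributed one-day returns; the function names, parameters, and the 99% confidence level are hypothetical choices for illustration, not taken from any particular product. Each batch of scenarios is independent, which is why this kind of workload spreads naturally across cluster nodes; here the batches simply run one after another.

```python
import random


def simulate_batch(seed: int, n_paths: int, value: float, mu: float, sigma: float) -> list[float]:
    """Simulate one-day profit/loss for n_paths scenarios.

    Assumption: daily returns are normally distributed with mean mu and
    standard deviation sigma (a deliberately simple, hypothetical model).
    """
    rng = random.Random(seed)  # independent, reproducible stream per batch
    return [value * rng.gauss(mu, sigma) for _ in range(n_paths)]


def monte_carlo_var(
    value: float,
    mu: float,
    sigma: float,
    n_batches: int = 8,
    paths_per_batch: int = 10_000,
    confidence: float = 0.99,
) -> float:
    """Estimate one-day VaR as the loss at the (1 - confidence) quantile of simulated P&L."""
    pnl: list[float] = []
    # Batches share no state, so on a cluster each could run on its own node;
    # this sketch runs them sequentially for simplicity.
    for seed in range(n_batches):
        pnl.extend(simulate_batch(seed, paths_per_batch, value, mu, sigma))
    pnl.sort()  # ascending: the largest losses come first
    index = int((1 - confidence) * len(pnl))
    return -pnl[index]  # report VaR as a positive loss amount


if __name__ == "__main__":
    var = monte_carlo_var(value=1_000_000, mu=0.0, sigma=0.02)
    print(f"1-day 99% VaR: {var:,.0f}")
```

Real risk models typically revalue entire portfolios under many correlated risk factors, so both the number of scenarios and the cost of each one grow far beyond this toy example.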

Increasing regulation is placing more pressure on companies to build management and financial accountability into their workflows. The Sarbanes-Oxley Act, guidance from the Casualty Actuarial Society (CAS), and the COSO Integrated Framework have helped shape industry practice. A number of HPC applications help companies meet these requirements.

Beyond regulatory compliance, many companies use high-performance computing to analyze customer behavior patterns and increase sales. By closely examining consumer habits, both online and brick-and-mortar retailers can use predictive analytics to tailor their services and products to their customer base. This insight can help forecast manufacturing and inventory levels, seasonal buying patterns, and even mundane details such as color preferences. Predictive analytics also help uncover patterns of fraudulent transactions.

The value of high-performance computing in public safety cannot be overstated, and HPC is deployed in local, regional, and national applications. Public safety is broad in scope and includes healthcare, first responders (EMS, fire, and law enforcement), weather patterns and warnings, transportation, corporate and educational campuses, energy, and disaster preparedness and recovery.

Historical data, together with current data collected and monitored by IoT devices, gives these entities invaluable insight, enhanced by HPC and visualization. Visualizing patterns that would be time-consuming, cumbersome, or in many cases impossible with traditional computing methods is now routine, limited only by the speed and parameters of data ingestion.

Life sciences benefit greatly from HPC, from genomics to drug testing. First responders gain significant advantages from historical patterns and predictive analytics, which help them better protect the public as well as themselves. The same applies to campus security for employees, students, and faculty. With global warming an escalating reality, weather forecasting, disaster preparedness, and disaster relief become more critical, and high-performance computing can help address them.

Engineering, across industry, academia, and government, is one of the largest segments of the high-performance computing market. Engineers in nearly every field use high-performance clusters to build computational solutions to practical engineering problems.

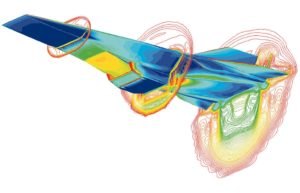

Computer simulations are a numbers game in which digital models are pitted against simulated physics to determine the model’s viability. A valid simulation requires solving a very large number of calculations, making simulation a prime candidate for HPC. Practitioners and researchers apply HPC to a wide range of uses such as fluid flow, structural mechanics, design, testing, and data mining. Electronics design and fluid dynamics are arguably two of the biggest application areas for HPC in this market.

Engineers use clusters to test, simulate, and evaluate products and designs ranging from simple items like snack packaging to complex aerospace systems. Structural engineers use them to design and test architectural plans and to verify the structural integrity of buildings, homes, and other civil structures. Fluid dynamics and process engineering are two other areas where clusters are heavily used.

The study of life sciences drives improvement and enhances the well-being of our society. In this context, life science generally refers to practices that use informatics to delve deeper into the secrets of living organisms. Deploying high-performance computing in these endeavors helps meet the demands of intensive analytics, modeling, and simulation.

Commonly used by pharmaceutical, healthcare, and biotechnology companies, HPC accelerates new discoveries, improves the effectiveness of clinical treatments, and provides a foundation for these organizations to stay competitive. The computational limits of molecular modeling, simulation, genomics, sequencing, and data management can be overcome by performing these tasks in high-performance computing environments.

Bioinformatics requires substantial computing, networking, storage, and efficient data management. There is no ‘one size fits all’ solution, and adapting and deploying high-performance computing to meet your goals requires a partner with the experience, skills, and tools to deliver a satisfactory, scalable solution.

The continued growth of HPC in academia is driven by the use of high-performance computing in government and industry. Academic research is not limited to specific fields and often spurs further research by corporations. Computational sciences are broad in scope and encompass many disciplines.

Academic research knows no bounds and is conducted in fields as diverse as:

- aerodynamics

- healthcare

- quantum mechanics

- molecular modeling

- climatology

- food sciences

- polymers

- cryptology

- exploration of both terrestrial and extraterrestrial phenomena